Galaga (小蜜蜂)

At this point of time, I need to select a Nintendo Famicom Mini game to train the AI agent. As mentioned earlier, the problem is that I need to be able to parse game screens (image data) to determine the score (reward signal for reinforcement learning) and whether the game is over (terminal state).

Nintendo Famicom Mini has 30 games built-in. I ended up picking ‘Galaga’, which is also called ‘Little Bee’ in Chinese.

The reasons are straightforward.

- ‘Galaga’ is one of the most iconic games of the classic Nintendo console.

- I’ve previously trained DeepMind’s DQN on Jetson TX1 to play another Shoot ‘em up game, i.e. ‘Space Invaders’. I think the AI agent would likely work for ‘Galaga’ too.

- Most importantly, it seems relatively easy to programatically parse Galaga game screens to determine game states and rewards, etc.

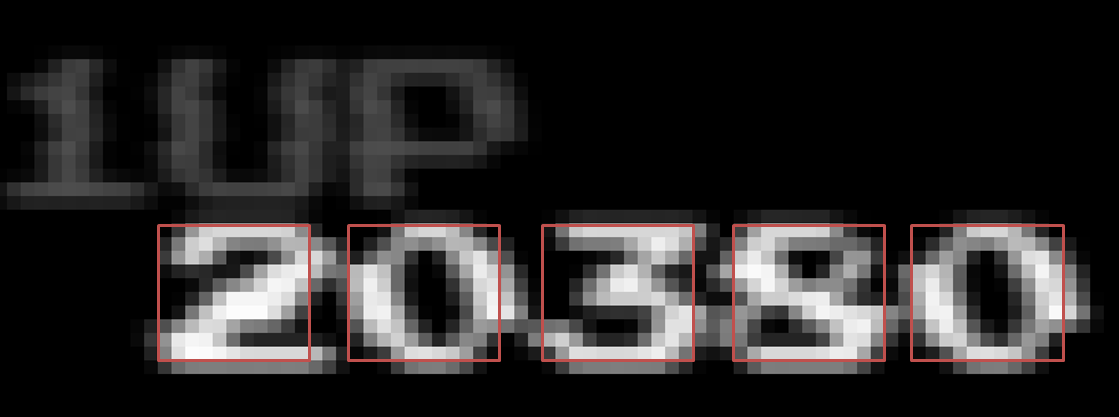

So I saved a few ‘Galaga’ game images with qlua test/test_vidcap.lua -save. Here is one example.

Next, I used an image editing software to find out location of game scores, lives (remaining number of fighters), “HIGH SCORE”, “- RESULT -“ (for GAME OVER) and flags, and also cropped the corresponding image patches. I saved all this information and the image patches in a Lua table and saved it to a file named ‘galaga/galaga_image.t7’.

In the galaga.lua module, I created functions such as get_score(), get_lives(), has_HIGH(), has_Flag() and has_RESULT(). I can then use this set of functions to determine game states easily.

Again, I put test code in the ‘test’ subdirectory. It should be run from the project root directory.

$ qlua test/test_galaga.lua

Feel free to check out the full source code in ‘galaga’ subdirectory of my GitHub repository.

https://github.com/jkjung-avt/dqn-tx1-for-nintendo

Finally, the way how I parsed the score from game images is probably worth some mentioning. I need to do this very efficiently since I’d likely need to do this on every frame of the video. So I have to find a way to balance accuracy versus required computation.

As shown on the image above, I’ve marked the exact location of all the digits in score. I’ve also cropped image patches for ‘1’, ‘2’, ‘3’, …, ‘9’ and ‘0’ respectively. When parsing the score, I compare each digit location with those patches and find the one with the shortest Euclidean distance, or 2-norm distance. I then combine all digits together to get the score. I actually tried a few differernt approaches and found this Euclidean distance method worked reliably enough and was quite efficient.

Here is the exact Torch7/Lua code I use to determine whether the digit (in the rectangle patch) is 0~9.

-- Translate a rectangle image to a single-digit number, by comparing the

-- image with pre-saved digit images one by one, and find the one with

-- the shortest 2-norm distance. Note galaga_image.digit[10] is the

-- pre-saved image of '0'.

local function rect_to_digit(rect)

-- assert(rect:isSameSizeAs(galaga_image.digit[1]))

local diffs = torch.DoubleTensor(10)

for i = 1, 10 do diffs[i] = torch.dist(rect, galaga_image.digit[i]) end

_, m = torch.min(diffs, 1)

return (m[1] % 10)

end